Your Elasticsearch Bill Doesn't Have to Keep Growing

Log Reduction Engine cuts storage costs 50-70% by keeping high-value logs at full fidelity and eliminating repetitive noise at ingestion. Zero observability gaps. Documented ROI before you start.

View Observability Case Studies →The Storage Cost Problem Nobody Budgeted For

Storage Costs Grow 30-50% Per Quarter

As services scale, Elasticsearch log volume grows unbounded. Every new microservice, every additional environment adds terabytes your budget didn't account for.

Blind Deletion Breaks Observability

When costs force action, the default response is blunt: delete old data, reduce retention windows. You save on storage and lose the evidence you need when production breaks.

Manual Retention Policies Miss What Matters

Manual ILM policies can't classify log events by diagnostic value, can't detect anomalies worth preserving, and can't project savings before implementation.

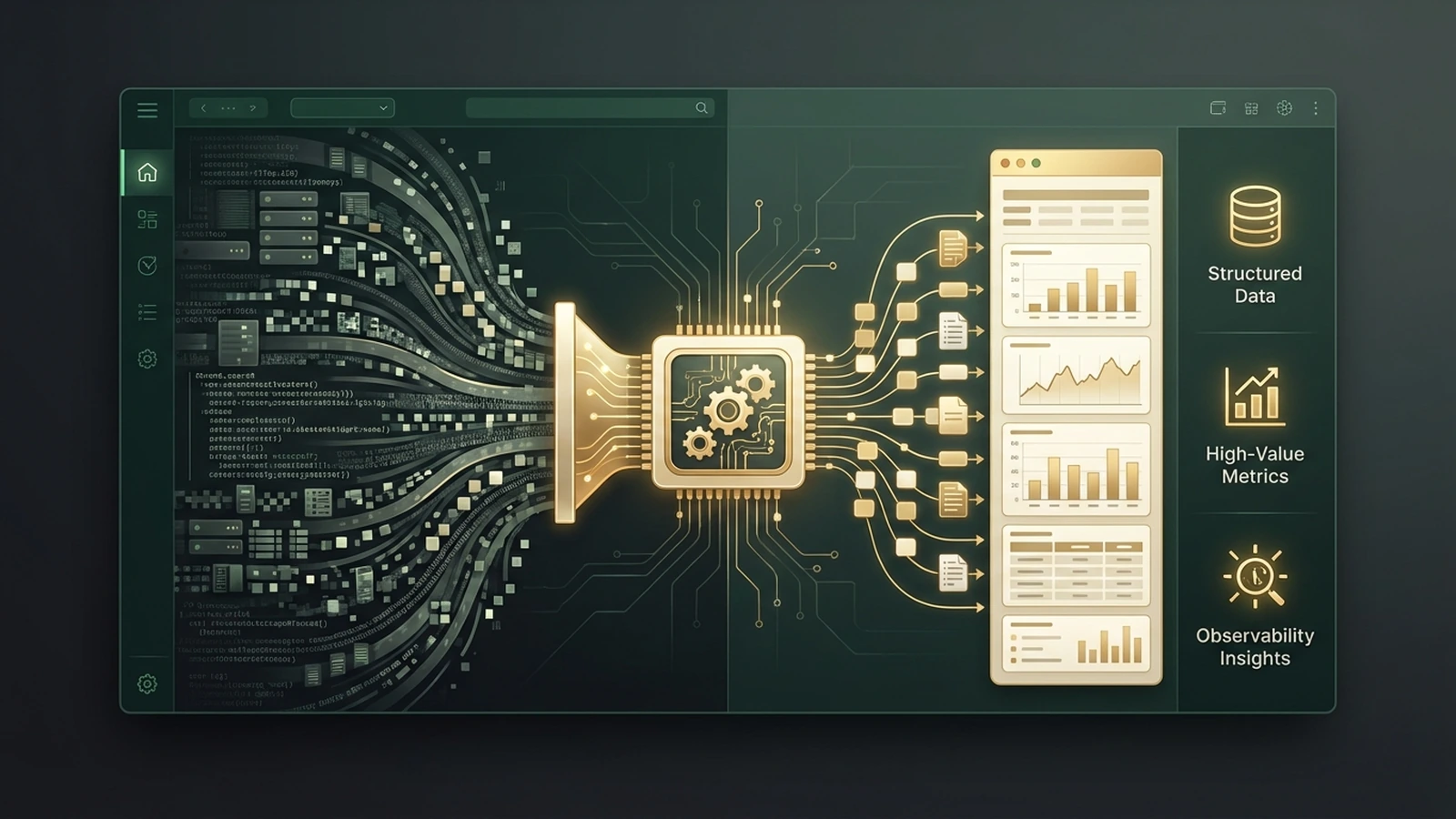

How Log Reduction Engine Works

Analyze

Scans your existing log streams and classifies every event by diagnostic value — anomaly-bearing, diagnostic, repetitive, or informational.

Configure

Sampling rules set per log type. High-value events kept at full fidelity. Repetitive success events sampled or aggregated. You control the rules.

Forecast

The cost forecasting engine projects your post-implementation storage volume and cost savings using your actual log data.

Deploy

Ingest pipeline processors deploy into your existing Elasticsearch pipeline. Log reduction runs automatically. A live dashboard shows savings in real time.

Raw logs in → ML classification → intelligent sampling → reduced storage, full observability

See Your Savings Before You Commit

The cost forecasting engine analyzes your current Elasticsearch log streams — volume, composition, redundancy patterns — and projects your post-reduction storage footprint. No estimates. No benchmarks from other companies. Your data, your projected savings, before implementation begins.

Six Capabilities That Cut Storage Without Cutting Visibility

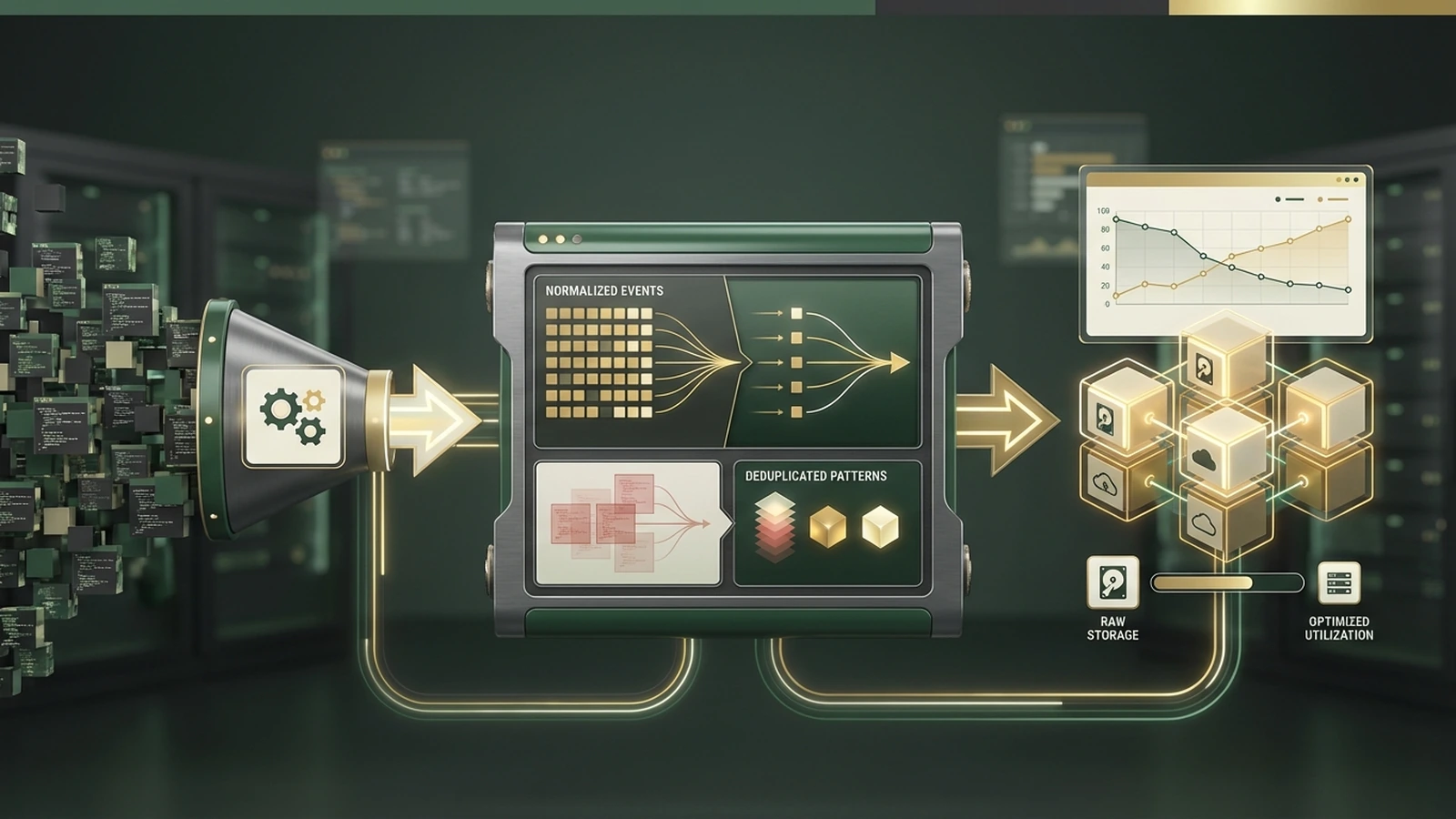

Intelligent Log Sampling

Keeps anomalies, errors, and slow operations at full fidelity. Samples repetitive success events that consume storage without adding diagnostic value.

Cardinality Reduction

Collapses high-cardinality field values — dynamic URLs, session IDs, request tokens — that inflate index size without adding insight.

Cost Forecasting

Pre-implementation analysis projects storage savings with specificity. See your expected ROI before any changes are deployed.

Per-Log-Type Retention

Different retention rules for different log types. Error logs retained longer. Debug logs sampled aggressively. Granular control replaces blunt retention.

Anomaly Preservation Guarantee

ML-based anomaly detection ensures anomalous events are never sampled out. Incident investigation capability stays intact by design.

Live Cost Dashboard

Real-time Kibana dashboard shows storage consumption before and after, cost savings per day, and sampling efficiency metrics.

Documented Results From Production Deployments

Before Log Reduction Engine

2.4TB of logs. $18K/month storage cost. 70% repetitive success events consuming storage. Cost trajectory unsustainable.

After Log Reduction Engine

800GB of high-value data. $6K/month storage cost. Error logs, anomalies, and slow operations at full fidelity. Same alert coverage, documented savings.

Where Log Reduction Engine Fits

Log Reduction Engine is a primary deliverable in Observability Modernization Sprint engagements alongside Topology Builder and Alarm Noise Suppression. Available standalone with custom implementation and dedicated cost forecasting analysis.

15+ Production Deployments. 50-70% Storage Reduction. Documented.

“Reduced Elasticsearch storage from 2.4TB to 800GB. Monthly costs dropped from $18K to $6K.”

Common Questions

Stop Paying for Logs Nobody Reads

Log Reduction Engine shows you the savings before you commit. Documented 50-70% storage cost reduction across 15+ production deployments.

View Observability Case Studies →