You Are Debugging Blind

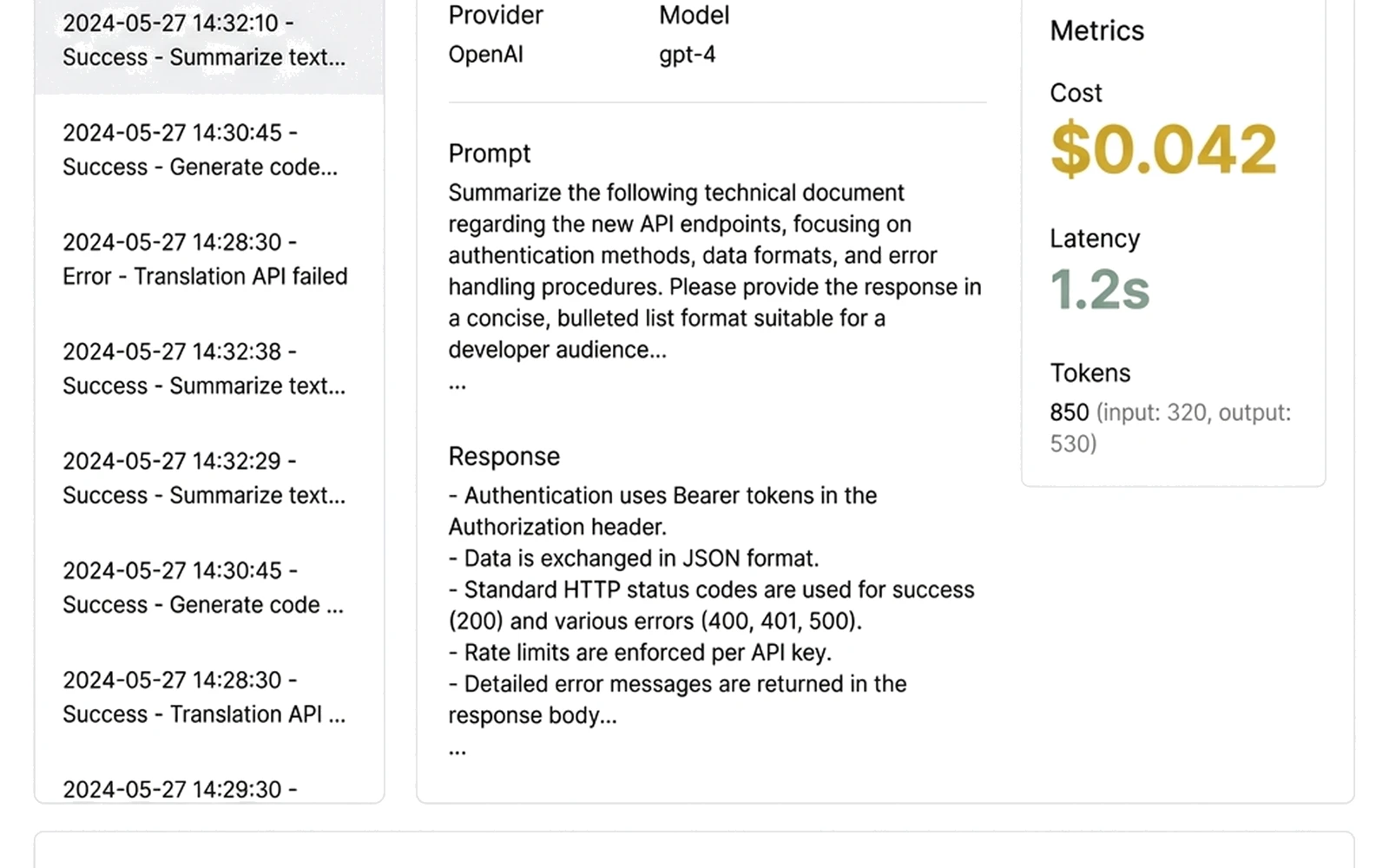

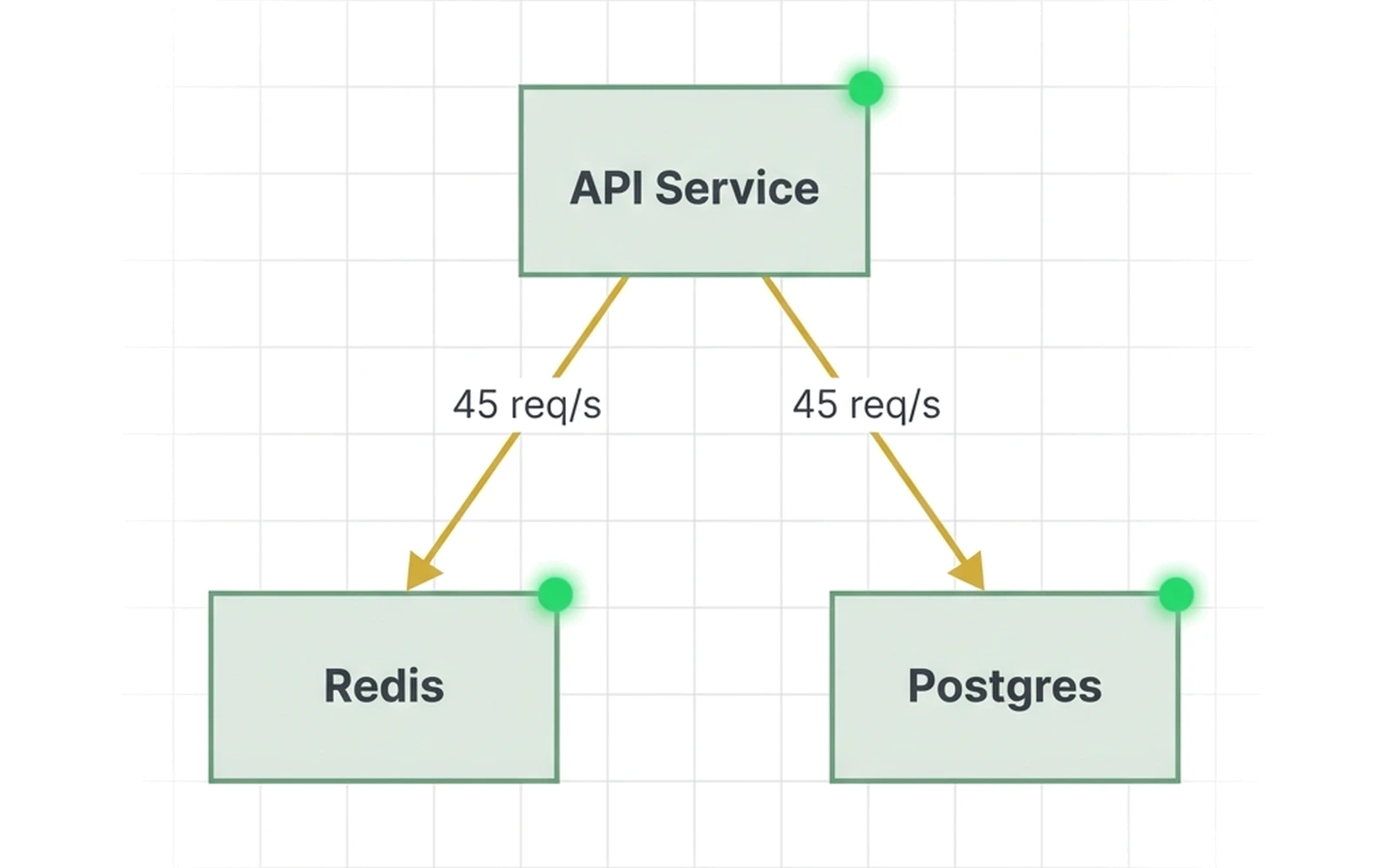

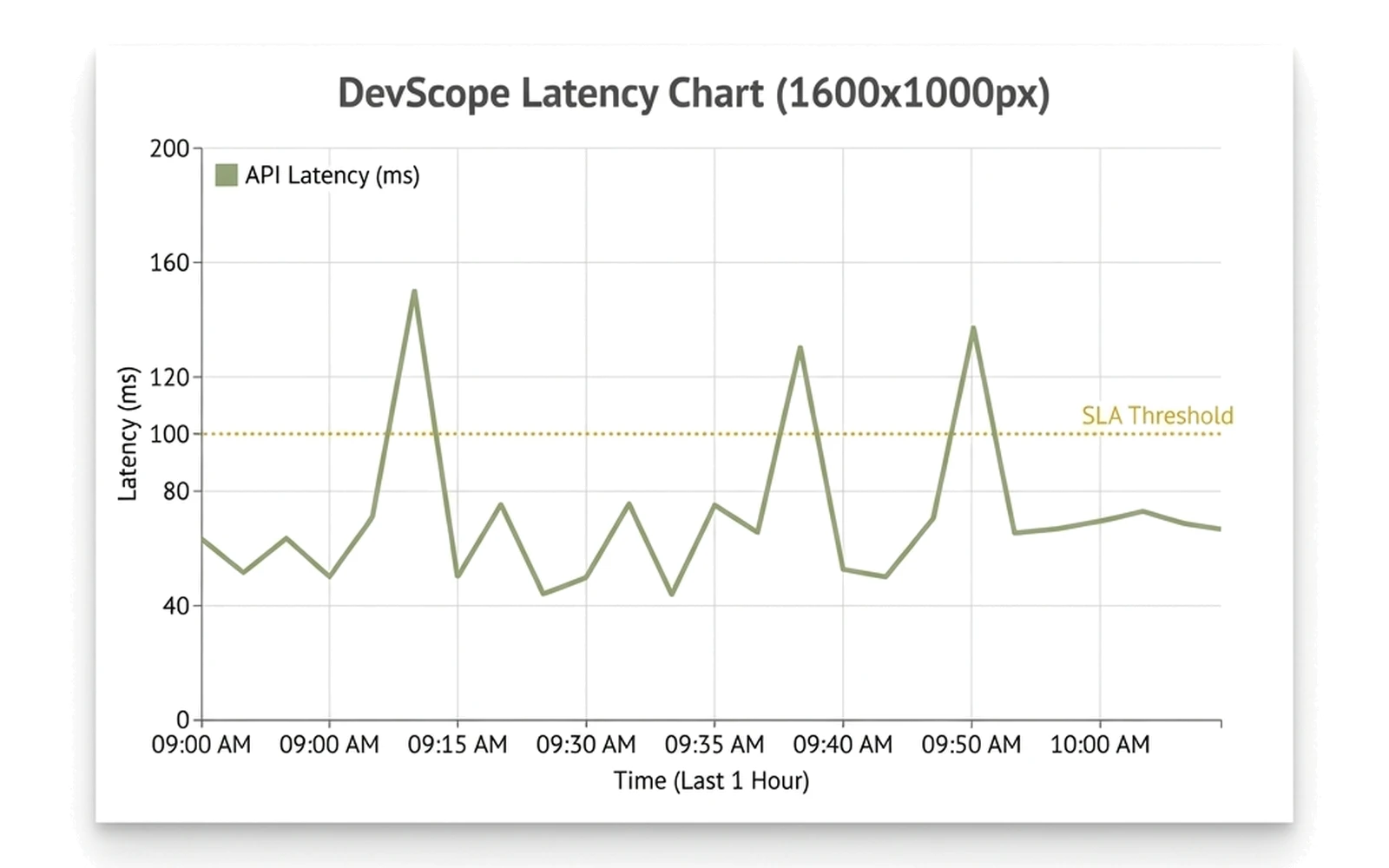

On your own machine, you can't see what your LLM calls, microservices, or API requests are actually doing. “Works on my machine” is a guess, not a fact. Without instrumentation, your local dev environment gives you almost no visibility into what your code does at runtime.

“I can’t see what my LLM calls are doing until I ship to staging.”

See how DevScope solves this